RoboCup Regionals!

I took the robot home overnight to install the necessary packages such as NumPy, SciPy, Matplotlib, OpenCV and ffmpeg.

On the day, we got off to a great start, super prepared with a fully functioning robot. We wish. There was still a whole bunch of stuff we needed to do and, on top of that, we were still missing a Li-Po, solder and heatshrink tubing. Since Mr Crane was going back to school anyway, we gave him a list of the things we still needed or had forgotten. As well as the batteries, solder and heatshrink, we asked him to get one of the Raspberry Pi 2s so we could start configuring our robot to run on it, which should provide more frames per second when streaming over the 900 MHz radio receiver.

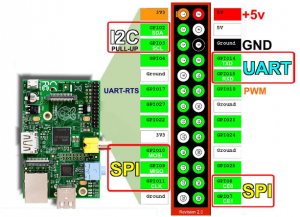

The Raspberry Pi GPIO pin layout diagram featured below is what we will use to connect all the necessary components to the Raspberry Pi so the robot can function correctly.

Although the diagram features a Raspberry Pi 1, the pin layout should be much the same on the Raspberry Pi 2.

Since our robot was unfit for any sort of competition, we instead passed the time researching ways to improve the robot’s design and recompiling OpenCV, which takes an extremely long time on the Raspberry Pi. Because of this, we tried using our Core 2 Duo Ubuntu laptop to compile OpenCV for the Raspberry Pi. It was a lot faster, but all the file paths were set for the Ubuntu machine rather than the Pi. In the end, Matthew and I took the robot home for the night and compiled OpenCV there, leaving the Raspberry Pi running through the night. See the “Afternoon” section for more on that.

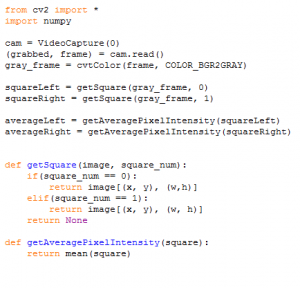

Without OpenCV, our robot was basically blind. Even so, we weren’t going to sit around all day doing nothing, so we started working on the line following script. Line following means the robot has to work out whether to follow the line forward, left or right. We first looked into how to detect the line visually, as we believed that could be the secret to following it. We found a previous RoboCup Junior competitor who had his own method of detecting the line using OpenCV with a fixed camera. He detected the line and used some algorithms to determine its slope and whether to turn left or right. After looking into this approach, we decided it would be difficult and unnecessary, so we came up with our own instead. Ryan wrote out an algorithm describing the method, and looked into cropping the image and getting the average using the “mean” method in OpenCV. He then started work on the actual script. The algorithm explained:

First, the robot checks two squares that it crops from the image it has just taken of the line. It converts the image to grayscale and finds the average pixel intensity of each square, then uses those averages to determine which combination it is looking at: both white, one black and one white, both black, one black and one green, or both green. From the combination, it can decide whether it should go forward, turn, or follow the green squares.
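To make the idea concrete, here is a rough sketch of the two-square check in plain Python. It is not Ryan’s actual script: the threshold values, the function names and the mapping from each combination to a movement are all assumptions made up for illustration, and the image is just a list of rows of grey values (0 to 255) so the example runs without OpenCV.

```python
# Hypothetical thresholds on 0-255 grayscale averages; real values need tuning.
BLACK_MAX = 60
WHITE_MIN = 180


def region_mean(gray, top, left, size):
    """Average grey level of a size x size square in a grayscale image."""
    total = 0
    for row in gray[top:top + size]:
        total += sum(row[left:left + size])
    return total / (size * size)


def classify(mean_intensity):
    """Label one square as 'black', 'green' or 'white' from its average."""
    if mean_intensity <= BLACK_MAX:
        return "black"
    if mean_intensity >= WHITE_MIN:
        return "white"
    # Assumption: a green tile lands somewhere between black and white.
    return "green"


def decide(left, right):
    """Map the pair of labels to a movement (this mapping is an assumption)."""
    if "green" in (left, right):
        return "follow_green"
    if left == right:
        return "forward"  # both white or both black
    # One black, one white: turn towards the side that sees the line.
    return "left" if left == "black" else "right"


def steer(gray, top, left_x, right_x, size):
    """Check the two squares and return the chosen movement."""
    left = classify(region_mean(gray, top, left_x, size))
    right = classify(region_mean(gray, top, right_x, size))
    return decide(left, right)


if __name__ == "__main__":
    # Tiny fake frame: black on the left half, white on the right half.
    frame = [[0] * 4 + [255] * 4 for _ in range(4)]
    print(steer(frame, 0, 0, 4, 4))
```

On the robot itself, the averaging step would probably be done with OpenCV’s `cv2.mean` on a cropped slice of the grayscale frame instead of the hand-written `region_mean`, and the thresholds would need tuning under the competition lighting.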

Afternoon

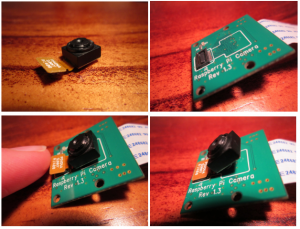

Not much could be accomplished on the day with regard to software or installing OpenCV due to the lack of an internet connection, so I continued installing and updating the Raspberry Pi at home, where an (albeit very slow) internet connection exists. During this time, I made an important discovery. At RoboCup, the team had noticed that the camera’s lens/shutter/sensor box (from now on referred to as just the “lens”) was very loose. The lens later fell off, but I found that it can simply be clipped back in. It should probably be hot-glued onto the board to stop it falling off again and getting lost. Until then, when removing the camera from the robot, push on the lens rather than pulling on the board.

Where to from here?

With RoboCup Regionals complete (and yes, we didn’t even compete; that rhymes, maybe we should go into poetry!), we march on towards Germany and all the problems it may bring. As a team, we have decided to skip the expensive trip to the Nationals competition. After all, we’ve already been invited back to the International competition in Germany. Our first order of business has to be the new chassis, but because of some very inconvenient 3D printer problems (see the blog post titled “Paper Jam” below), we are going to have to wait at least a week before we can print off the new chassis. After that, it becomes a coding issue. Fortunately, we have already created most of the code that we will be using.