Hi, it’s Anthony again.

This post is an addition to the first part of this series. It really belongs in that blog post, but that one was getting too long, so I split it out into its own post. If you haven’t read part 1, you can find it here:

https://www.sfxrescue.com/developmentupdates/day-195-hazmat-part-1-detecting-the-danger/

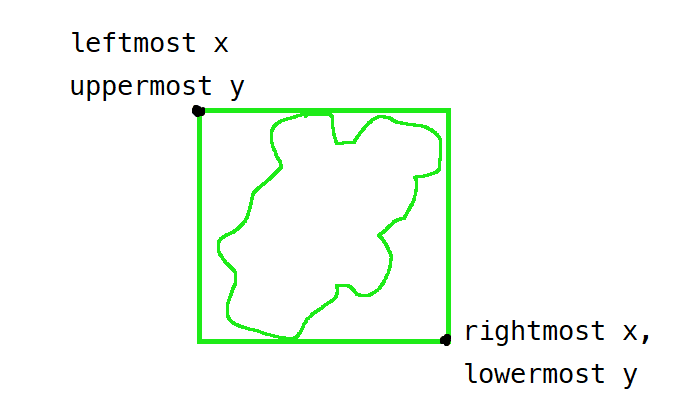

After a contour has been detected, it needs to be sliced out of the original image and stored in a separate variable before it can be classified. This is done using NumPy’s array slicing.

We need to iterate over each point in the contour and find the smallest and largest x and y coordinates, which give us the bounding box shown in the image above. Then we can use the NumPy slice syntax arr[y1:y2, x1:x2], which returns a new image whose upper left corner is at (x1, y1) and which extends up to, but does not include, row y2 and column x2. Note that the row (y) range comes first and the column (x) range second, because images are stored as arrays of rows. This can be done with the following code:

```python
def region(image, contour):
    # make sure the inputted contour is an array of points
    # in the form [[x, y], [x, y], [x, y], ...] for the following code to work.
    # beware that most contour detection functions in opencv store the points
    # in two layers of array, like [[[x, y]], [[x, y]], [[x, y]], ...]

    # default the bounding box to the x and y coordinates of the first point in the contour
    leftmost = contour[0][0]
    rightmost = contour[0][0]
    uppermost = contour[0][1]
    lowermost = contour[0][1]

    # continue to replace them if we find a better one while looking at each point
    for point in contour:
        if point[0] < leftmost:
            leftmost = point[0]
        if point[0] > rightmost:
            rightmost = point[0]
        if point[1] < uppermost:
            uppermost = point[1]
        if point[1] > lowermost:
            lowermost = point[1]

    # return a new image which is only the slice we found.
    # rows (y) come first, then columns (x); slice ends are exclusive,
    # so add 1 to keep the lowermost row and rightmost column
    return image[uppermost:lowermost + 1, leftmost:rightmost + 1]
```
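As a rough alternative to the loop above, the same bounding box can be found with NumPy's `min` and `max` along an axis. This is only a sketch: `region_vectorized` is a hypothetical name, not something from the original code, and it assumes the contour holds integer pixel coordinates. It calls `reshape(-1, 2)` so that it accepts both the flat `[[x, y], ...]` layout and the two-layer `[[[x, y]], ...]` layout that OpenCV produces, and it adds 1 to each slice end so the lowermost row and rightmost column are kept.

```python
import numpy as np


def region_vectorized(image, contour):
    # Hypothetical helper for illustration; not part of the original post.
    # reshape(-1, 2) turns an (N, 1, 2) OpenCV-style contour into (N, 2);
    # a contour that is already (N, 2) is left unchanged.
    points = np.asarray(contour).reshape(-1, 2)

    # Column 0 holds x values, column 1 holds y values.
    left, top = points.min(axis=0)
    right, bottom = points.max(axis=0)

    # Rows (y) are indexed first, then columns (x).
    # Slice ends are exclusive, so add 1 to include the far edge.
    return image[top:bottom + 1, left:right + 1]


# Small made-up example: a 6-row by 8-column "image" and a square contour.
image = np.arange(48).reshape(6, 8)
contour = np.array([[[2, 1]], [[5, 1]], [[5, 4]], [[2, 4]]])
crop = region_vectorized(image, contour)
print(crop.shape)
```

If OpenCV is already being used for the contour detection, `cv2.boundingRect(contour)` returns the same box as an `(x, y, w, h)` tuple, which may be simpler than computing it by hand.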